There’s a pattern I’ve seen across every major technology shift in my career. A new capability drops. Everyone rushes to build demos. A few people quietly build infrastructure.

The demo builders get attention. The infrastructure builders get leverage.

Google just made a significant infrastructure move — and based on what I’m seeing in most GTM and ops conversations, people are treating it like a developer curiosity instead of what it actually is: a signal about where AI agent adoption is heading next.

Let me explain what’s happening and why it matters if you run a team, build products, or are trying to figure out where to actually place your bets with AI.

What Google Actually Launched

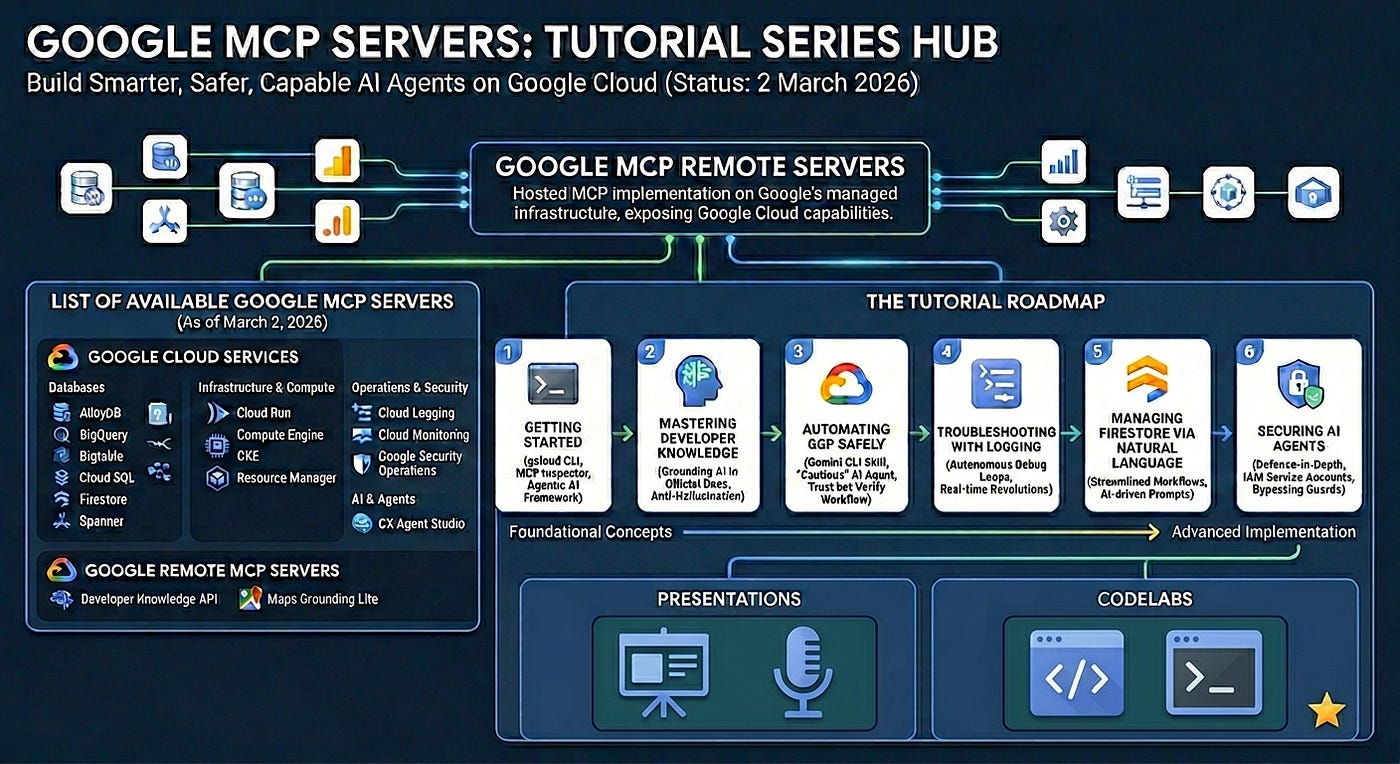

Google released a set of managed, remote MCP servers — Model Context Protocol integrations that let AI agents talk directly to Google Cloud services. We’re talking BigQuery, Firestore, Cloud Logging, Google Maps, Vertex AI Search, GKE, and more.

If that sounds overly technical, here’s the version that matters:

Your AI agents can now read from your databases, query your logs, pull from your analytics warehouse, and act on that data — without you building and maintaining the plumbing yourself. Google runs the infrastructure. You configure the connections. The agent does the work.

That’s a different world than what we’ve been operating in.

Most of the AI agent implementations I see today are brittle. Someone builds a workflow that calls an API, wraps it in a custom integration, hosts it somewhere, and prays it doesn’t break. There’s no standardization. There’s no security layer baked in. It works until it doesn’t, and then you’re six layers deep in debugging.

MCP changes that architecture. And Google hosting MCP servers on managed infrastructure, with IAM security, stateless scalability, and grounding in official documentation, is the enterprise-grade shift that makes this practical at scale.
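If you want to see what "standardized" means on the wire, here's a minimal sketch. MCP messages are JSON-RPC 2.0, and an agent discovers and invokes tools through the `tools/list` and `tools/call` methods. The tool name `run_query` and its `sql` argument are hypothetical placeholders I made up for illustration, not names from Google's servers, and the sketch skips the initialization handshake, transport, and IAM authentication that a real connection needs.

```python
import json
from itertools import count

# Simple incrementing request ids; JSON-RPC pairs each response with its request id.
_ids = count(1)

def mcp_request(method, params=None):
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg

def tool_call(name, arguments):
    """Build a tools/call request for a named tool with its arguments."""
    return mcp_request("tools/call", {"name": name, "arguments": arguments})

# Ask the server which tools it exposes.
list_req = mcp_request("tools/list")

# Invoke one tool. "run_query" and "sql" are hypothetical, for illustration only.
call_req = tool_call("run_query", {"sql": "SELECT COUNT(*) FROM sales.orders"})

print(json.dumps(call_req, indent=2))
```

The point is that every tool on every server is called with the same envelope. The agent doesn't need a custom integration per service, only the tool's name and its arguments.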

Why Most People Will Miss What This Signals

I’ve been in enough technology cycles to know how this plays out.

When something new drops, the people closest to the technology see the capability immediately. The people who actually transform their businesses with it are the ones who see the second-order effect — what this enables that wasn’t possible before.

Here’s the second-order effect nobody’s talking about:

Google didn’t just make it easier to connect AI to Google Cloud services. They built a standardized, secure, enterprise-ready interface between AI agents and live operational data. That’s the gap that’s been stalling real agentic deployment in serious organizations.

Think about the problems I hear from operators constantly:

“We built something with GPT, it worked in demos, but we can’t get it into production because security won’t approve it.”

“Our AI agent workflow is great until the data it needs lives in three different systems with no clean integration.”

“We can’t give AI agents write access to anything important because there’s no audit trail.”

Google’s architecture addresses all three. IAM Service Accounts as the security layer. Managed stateless infrastructure. Official documentation grounding via the Developer Knowledge MCP Server so agents aren’t hallucinating API syntax.

The foundation just got a lot sturdier.

What This Actually Looks Like in Practice
