Agents Are Hands. The Knowledge Graph Is the Brain.

104 agents without a shared memory are 104 consultants in a Slack channel who never read each other’s messages.

That sentence has been sitting in the back of my head for six months. I’ve watched a lot of companies go wide on agents, proud of the count, proud of the range, proud of the “AI transformation” slide in the board deck. And then I ask what happens on a Tuesday morning when the marketing agent learns something important about a competitor, and the sales agent picks up a call with that same competitor an hour later. Nothing happens. The second agent starts cold. The first agent’s insight evaporates the moment the session ends. The count on the slide is a lie.

Revenue is engineered, not hoped for. The same thing is true for agentic systems. More agents don’t produce more intelligence if the underlying system has no memory.

What I Got Wrong The First Time

I’ll save you the lecture and lead with the confession. My first version of this system was genuinely embarrassing.

I had a folder of agent files. I had prompts I was proud of. I had a handful of skills wired into Claude Code. What I did not have was any way for those agents to know what any other agent had ever done, said, or learned. Every session started at zero. Every question got researched again. The CMO agent would produce a positioning brief on Monday. The sales enablement agent would ask the same positioning questions on Wednesday. I was paying in tokens and time for work I’d already done.

The deeper failure was that I couldn’t see it. The individual outputs looked great. Each agent, on its own, produced a useful artifact. It was only when I tried to chain them that the gaps showed up. A research agent would cite a stat. A writing agent would misquote it by 15%. A review agent would miss the misquote because it had never seen the original. Each agent was locally competent and globally incoherent.

That’s the failure mode nobody talks about when they show off their agent count. Agents that can’t share memory are not a system. They are a cast.

The Extended Analogy, Then The Twist

Most people think about agents like hiring. You’ve got a CMO, a CRO, a controller, a research analyst. Each one is a specialist. Each one has a job description. You build your org chart, you set goals, you run 1:1s, and the work gets done.

That framing is almost right, and the “almost” is the part that kills companies.

A real hire has shared context by default. They sit in the same meetings. They hear the same hallway conversations. They read the same Slack threads. They remember what happened last quarter because they lived through it. An agent has none of that. An agent is a contractor on their first day, every day, forever, unless you build the substrate that gives them continuity. Your org chart of agents is not 104 hires. It is 104 contractors walking into a building with no elevator. They can each do great work on floor 7. They just can’t get to floor 8.

The knowledge graph is the elevator.

Why Memory Is A Layer, Not A Feature

The Revenue Nervous System has six layers: Data, Intelligence, Context, Memory, Orchestration, Execution. I get asked all the time why Memory is a layer and not a capability inside Orchestration or Context. Because Memory is what makes the other layers cooperate.

Data without Memory is a warehouse that forgets yesterday. Intelligence without Memory is a model that re-derives the same pattern every morning. Context without Memory is a retrieval step that never learns which retrievals worked. Orchestration without Memory is routing that treats every request like it’s the first one. Execution without Memory is a writeback that nobody reads.

Memory is what turns a stack of separate services into a system that compounds. Without it, every run is independent. With it, the week after produces better answers than the week before, because the week before left a trace.

The shape of the fix is a knowledge graph with four layers of its own (separate from the six layers of the Revenue Nervous System) that stay in sync whenever anything gets written: visual, context, vector, and temporal. Each answers a different question. Together they are the Memory Layer. Agents that can’t share memory are a cast. Agents that can share memory are a team.

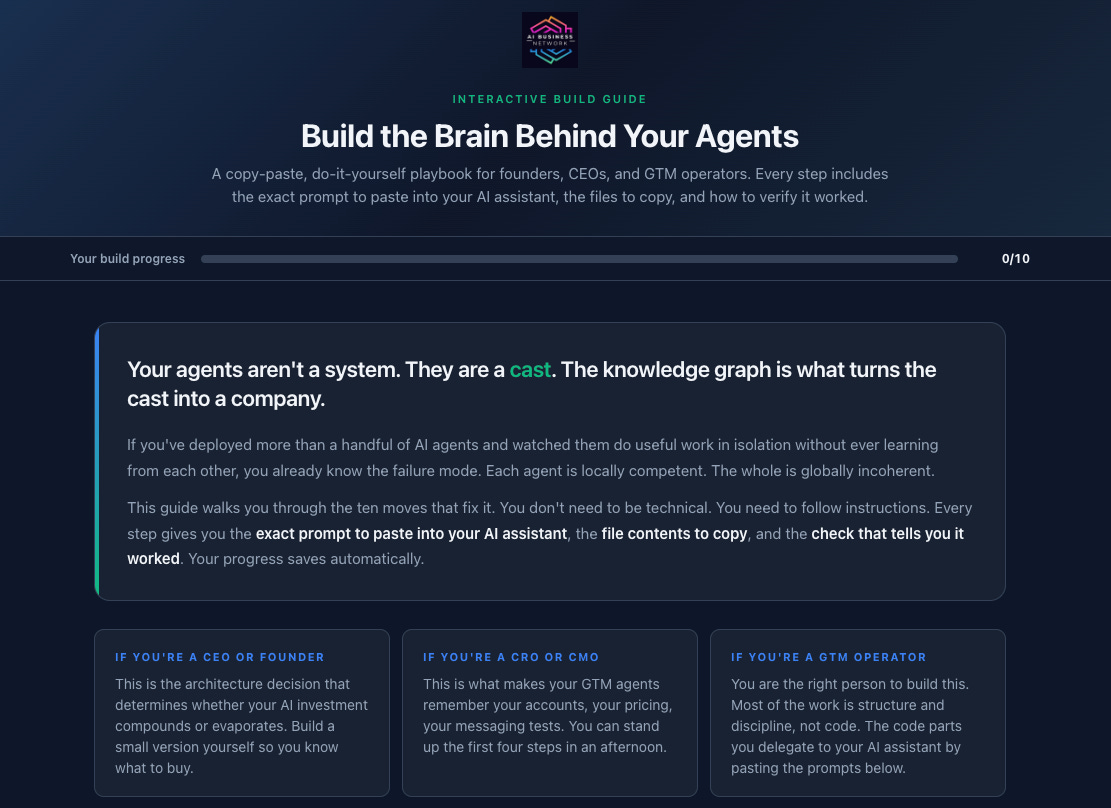
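To make the "four layers that stay in sync on every write" idea concrete, here is a minimal in-memory sketch. It is an illustration under stated assumptions, not the actual implementation. The post does not specify what each layer stores, so the mapping below is my guess: visual as entity links, context as notes per entity, vector as a searchable embedding, temporal as a timestamped log. The names `MemoryLayer`, `write`, `recall`, and `embed`, plus the example companies, are all hypothetical, and the bag-of-words `embed` is a toy stand-in for a real embedding model.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timezone
import math


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


@dataclass
class MemoryLayer:
    # Assumed meaning of each layer; the post names them but does not define them.
    edges: dict = field(default_factory=dict)    # visual: entity -> linked entities
    notes: dict = field(default_factory=dict)    # context: entity -> list of notes
    vectors: list = field(default_factory=list)  # vector: (embedding, note text)
    events: list = field(default_factory=list)   # temporal: (time, agent, entity, text)

    def write(self, agent: str, entity: str, text: str, links=()) -> None:
        """Record one insight and update all four layers in the same call."""
        now = datetime.now(timezone.utc)
        self.edges.setdefault(entity, set()).update(links)
        for other in links:
            self.edges.setdefault(other, set()).add(entity)
        self.notes.setdefault(entity, []).append(text)
        self.vectors.append((embed(text), text))
        self.events.append((now, agent, entity, text))

    def recall(self, query: str, k: int = 1) -> list:
        """Return the k stored notes most similar to the query."""
        q = embed(query)
        ranked = sorted(self.vectors, key=lambda pair: cosine(q, pair[0]), reverse=True)
        return [text for _, text in ranked[:k]]


# The Tuesday-morning scenario: marketing writes, sales reads an hour later.
memory = MemoryLayer()
memory.write("marketing", "Acme", "Acme cut enterprise pricing this quarter", links=["pricing"])
memory.write("research", "Globex", "Globex hired a new head of partnerships")
print(memory.recall("what do we know about Acme pricing"))
```

The design choice worth noticing is that `write` is the only way in, so no layer can drift out of step with the others. A real system would persist each layer to disk or a database. The sketch only shows the single-write, four-update contract.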

That is the framing. The next question is the only one that actually matters for operators: what does this look like on disk, what runs when, and how do you build it yourself without hiring a platform team.

How You Actually Build It
